Starting this month, three Nature journals—Nature Methods, Nature Biotechnology and Nature Machine Intelligence—will run a trial in partnership with Code Ocean to enable authors to share fully-functional and executable code accompanying their articles and to facilitate peer review of code by the reviewers.

This guest blog comes from Erika Pastrana, Executive Editor for the Nature Research Journals, and Sowmya Swaminathan, Head of Editorial Policy and Research Integrity at Nature Research.

Increasing the reproducibility of scientific findings is a goal that all of us in the research enterprise share.

One path towards achieving this is to encourage authors to provide all relevant data and code associated with a published article. This enables others to re-run the analyses, reproduce the results and re-use the code and data to build on the work, advancing science further.

Since 2014 the Nature journals have required authors of studies with custom code or algorithms that are central to the conclusions to provide a “Code Availability” statement indicating whether and how the code or algorithm can be accessed, including any restrictions to access. In 2016, we adopted a policy of mandatory “data availability statements” on all Nature journal papers. The guiding principle is that these statements must provide enough information for readers to be able to reproduce the results and access the code and data for use in their own research.

A number of Nature Research journals have, for years, also peer reviewed code when it is central to the paper to ensure it is vetted scientifically, and provided the code as part of the published paper, typically in the supplementary information or via a link to a folder on GitHub (see this Nature Methods editorial from 2014). Despite our long-running efforts to publish code that is peer reviewed and useful, our platforms have not always been best suited to this task.

We know peer reviewing code is cumbersome, as it requires authors to compile the code in a format that is accessible for others to check, and reviewers to download the code and data, set up the computational environment on their own computer and install the many dependencies that are often required to make it all work. To facilitate this process, we recently developed new guidelines for authors and a checklist to help during code submission, but there are now tools available that go beyond checklists and PDFs.

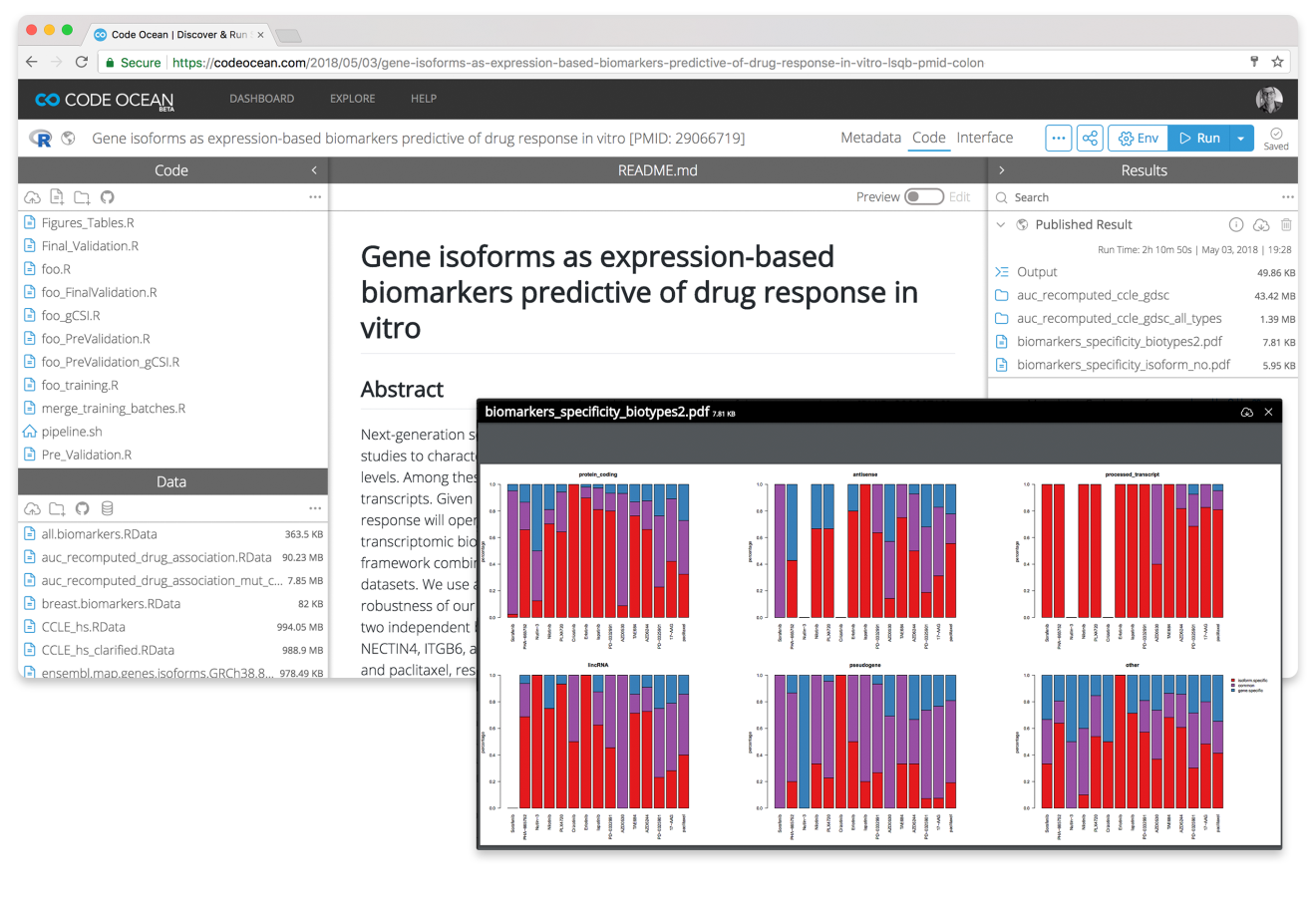

Code Ocean is a computational reproducibility platform that aims to make code more readily executable and discoverable. The platform, which is based on Docker, hosts the code and data in the necessary computational environment and allows users to re-run the analysis in the cloud and reproduce the results, bypassing the need to install the software.

The trial is optional for authors of papers undergoing code peer review at these selected journals. Reviewers will be offered as much runtime as they need to run the code and analyses (100 hours per month by default), and upon publication, the code and data will be assigned a digital object identifier (DOI) and cited in the article, enabling readers to access it freely via a link. Code Ocean, through CLOCKSS, will guarantee the preservation of the code, data, results, metadata and computational environment.

By partnering with Code Ocean, we hope to further facilitate compliance with our policies and practices, and to provide benefits to authors, reviewers and readers by improving the peer review experience and facilitating sharing of code that is reproducible and useful. We hope this functionality will also enhance our papers by linking to a platform where the results, code and data can be more easily verified, reproduced and re-used.

We will be listening attentively to the response from our community, and will survey all the authors and reviewers who participate in the trial to learn from their experience.