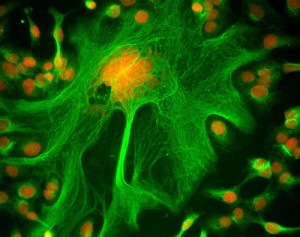

Our choices of Method of the Year in prior years have tended to be methods that didn’t even exist only a few years earlier but that had quickly bounded onto the scientific stage and attracted the attention of a large portion of the scientific community. Targeted proteomics, our choice for 2012, on the other hand, has existed for years in scaled-down forms using methods based on antibodies. Western blotting, immunofluorescence, antibody arrays, etc. can all be used to detect and measure targeted subsets of the proteins expressed in cells and tissues.

During this time the workhorse of proteomics, the mass spectrometer, has been used mostly for shotgun proteomics experiments in which the goal was to analyze all the proteins in a sample. But the means to use these machines for targeted detection of defined subsets of proteins and obtain more reproducible measurements than shotgun experiments can typically provide have been around for decades.

Shotgun methods have been mostly confined to specialist laboratories, as many biologists have been intimidated by the complexity of implementing and analyzing these experiments properly. Targeted proteomics, on the other hand, offers a tantalizing opportunity to bring a sampling of the power of mass spectrometry to the wider community of biologists. The assays are simpler, easier to run and well suited to the hypothesis-driven experiments that are the mainstay of biological research.

The ubiquitous Western blot has long filled a central role or functioned as a crucial control in many research studies. Unfortunately, performing a high-quality Western blot can feel a bit like roulette. Sometimes you get a fantastic-looking blot with an accurate antibody, but other times the blot is blank, the bands look like they ran through some carnival ride or it suffers from any number of other problems. This might prompt people to either look for a goat to appease the Western blot gods or take unscientific liberties with the presentation of the data in order to make it look like they believe it should. It also lessens the likelihood that important replicates are performed or reported.

Targeted mass spectrometry offers the possibility for thousands of labs to move away from, or supplement, Western blots and to improve the quality and quantity of their protein measurements. This is not as sexy as next-generation sequencing, super-resolution imaging or optogenetics, some of our prior choices of Method of the Year, but the potential for revolutionizing an arguably mundane but indispensable technique was compelling enough that it played no small role in our decision. Only time will tell what impact the method has, and we eagerly look forward to the answer.