We published another double header yesterday, this time on the role of particular cell types in visual responses. Both studies describe the effect of optogenetically manipulating various interneuron classes in mouse visual cortex. The papers are Lee et al. from Yang Dan’s lab and Wilson et al. from Mriganka Sur’s lab. And in fact, both were preceded by Atallah et al. from Massimo Scanziani’s lab, which appeared in Neuron earlier this year. That means a bonanza of data on the effects of activating parvalbumin-expressing interneurons, and also a bonanza of interpretations of their exact role: everyone comes to slightly different conclusions.


A tale of three papers

I wanted the title of this post to be “A tale of ~~two~~ ~~one~~ ~~two~~ three papers” but I couldn’t figure out how to get strikethroughs in the title field. And I thought “A tale of two, make that one, no make that two again, oops now three” might be a bit cumbersome. As promised, here’s another installment of the discussion of what happens when we receive conceptually related/overlapping papers. It starts with a paper that appeared just yesterday in Neuron by Kenichi Ohki and colleagues describing how mouse visual cortex neurons that developed from the same neural progenitor cell tend to be more similar functionally than those that did not.

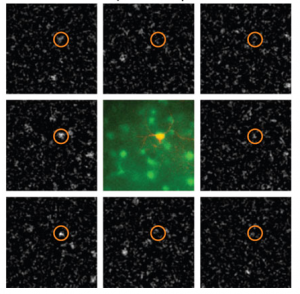

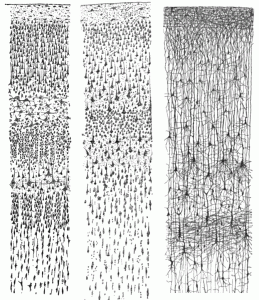

Why is this significant? First, a little background. Cells in visual cortex are tuned to different aspects of visual stimuli, such as orientation or direction, and anatomically are organized quite specifically. Cells with similar preferences tend to cluster together and to be selectively connected with each other (though to differing degrees in different species), and this specificity may underlie some of the many computations required to turn photons of light hitting our eyes into comprehensible percepts. It’s been proposed that this clustering could start in early development: neurons born from the same neural progenitor migrate vertically to form columns of sibling neurons, and these columns could be the basis for clusters of adult cells with similar properties. That link hadn’t been demonstrated experimentally until now, and Ohtsuki et al. provide some evidence in support of it.

Now, visual cortex aficionados among you may think this sounds a bit similar to Li et al., a paper by Yang Dan and colleagues that appeared a few months ago, and indeed it is. And you may also recall that THAT paper appeared alongside Yu et al. from Songhai Shi’s lab about the development of synapses between sibling neurons.

So here’s the story from the beginning (or rather, the beginning of our involvement with the manuscripts).

Layer magic and monkey business

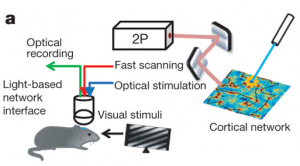

We’ve known for over a century that sensory cortex is arranged in distinct layers, each containing a different makeup of neuronal types and projection patterns, but we don’t know that much about the actual computations performed in each layer. Today a paper from Massimo Scanziani’s lab takes a big step towards cracking the function of the bottom layer (layer 6) in mice. Layer 6 neurons project both to upper cortical layers and to the lateral geniculate nucleus in the thalamus, which itself is the primary input to cortex, and so are primed to play a large modulatory role. Using a monumental combination of optogenetics, intracellular recording, and behavioral testing, the paper convincingly makes the case that layer 6 controls the gain of visual responses of upper layer neurons (i.e. changes the size of their responses without altering their selectivity). Gain control is a fundamental computation in cortex, and has been invoked as a mechanism for attention, perception, spatial processing, and more. The cellular mechanism here is worked out in primary visual cortex, but it could potentially operate throughout layered cortex.
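
For readers who like to see "gain control" spelled out, here is a minimal sketch in Python of what "changing the size of responses without altering their selectivity" means. Everything in it is an illustrative assumption rather than anything taken from the paper: the Gaussian tuning curve, the function names, and the parameter values (preferred orientation, width, peak rate, and a gain of 0.5) are all hypothetical.

```python
import math


def tuning_curve(orientations_deg, preferred_deg=90.0, width_deg=20.0, peak_rate=10.0):
    """Hypothetical Gaussian orientation tuning curve (firing rate in spikes/s)."""
    rates = []
    for theta in orientations_deg:
        # Orientation is circular with a 180-degree period, so wrap the
        # difference from the preferred orientation into [-90, 90).
        delta = (theta - preferred_deg + 90.0) % 180.0 - 90.0
        rates.append(peak_rate * math.exp(-0.5 * (delta / width_deg) ** 2))
    return rates


def apply_gain(rates, gain):
    """Multiplicative gain: every response is scaled by the same factor."""
    return [gain * r for r in rates]


def preferred_orientation(orientations_deg, rates):
    """Orientation that evokes the largest response."""
    best_index = max(range(len(rates)), key=rates.__getitem__)
    return orientations_deg[best_index]


def normalized(rates):
    """Tuning curve rescaled to a peak of 1, i.e. its shape (selectivity)."""
    peak = max(rates)
    return [r / peak for r in rates]


orientations = list(range(0, 180, 15))
baseline = tuning_curve(orientations)
scaled = apply_gain(baseline, gain=0.5)  # arbitrary gain value, for illustration only

print("peak rate:", max(baseline), "->", max(scaled))
print("preferred orientation:",
      preferred_orientation(orientations, baseline), "->",
      preferred_orientation(orientations, scaled))
```

The peak response changes, but the preferred orientation and the normalized shape of the curve do not, which is the signature of a pure gain change in this toy setting. A subtractive or threshold-like change would instead sharpen or flatten the normalized curve.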

Telepathy? I think not

There is just something about neural decoding that captures the imagination. Scientists “reading out brain activity” to infer what someone was seeing or doing sounds like the stuff of science fiction. But in practice, with the right dataset and the right computer algorithm, it can be done, provided the question you are trying to ask of the brain is simple enough. But no matter how simple the question, with every paper comes an orgy of stories in the mainstream press about how scientists can eavesdrop on your thoughts or even engage in electronic telepathy. This infuriates scientists and science journalists in droves, and sometimes detracts from some very cool work.

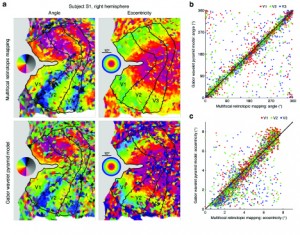

Today I’m going back a few years to a paper that typifies this effect, a study from Jack Gallant’s lab about a model for decoding natural images from fMRI activity in early visual cortex.