After nine years in print, Nature Methods today published its first retraction, one that could have been prevented by cell line authentication. What does this mean for journal-mandated cell line testing?

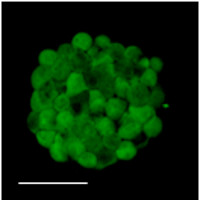

Two-photon fluorescence image of a live primary gliomasphere from the retracted manuscript.

Cells from the autofluorescent fraction could self-renew clonogenically in vitro and were tumorigenic when transplanted into mouse brains, the authors reported, and in both cases performed better than non-autofluorescent cells from the rest of the culture or tissue. The origin of this autofluorescent signal was not understood at the time. The authors speculated that it might be related to the unique metabolism of the cancer-initiating cells.

It turns out that most of the primary gliomasphere lines (7 out of 10) were contaminated with HEK cells expressing GFP, leading to retraction of the paper. Using short tandem repeat (STR) profiling of two of the lines, the authors determined that the contamination occurred over the course of culture in the lab: samples taken from early passages match the original tissue from which the lines were derived, but later passages no longer do so.
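To make the early-versus-late passage comparison concrete, here is a minimal sketch of how two STR profiles can be scored against each other. It is not the authors' analysis. It assumes the commonly used Tanabe-style score (twice the number of shared alleles divided by the total number of alleles in both profiles) and an 80% cutoff for calling two samples related, a threshold often cited in authentication guidance. The function names, the cutoff constant and all allele values below are hypothetical and chosen only for illustration.

```python
from typing import Dict, Set

# A profile maps an STR locus name to the set of alleles called at that locus.
Profile = Dict[str, Set[str]]

# Assumption: an 80% match is treated as "same origin". Check the guidance
# you follow before relying on this cutoff.
MATCH_THRESHOLD = 80.0


def str_match_percent(query: Profile, reference: Profile) -> float:
    """Tanabe-style score: 2 * shared alleles / (alleles in both profiles), in %.

    Only loci present in both profiles are compared.
    """
    shared = 0
    total = 0
    for locus in query.keys() & reference.keys():
        q_alleles, r_alleles = query[locus], reference[locus]
        shared += len(q_alleles & r_alleles)
        total += len(q_alleles) + len(r_alleles)
    if total == 0:
        raise ValueError("profiles have no loci in common")
    return 100.0 * 2 * shared / total


def same_origin(query: Profile, reference: Profile) -> bool:
    """Return True if the score meets the assumed threshold."""
    return str_match_percent(query, reference) >= MATCH_THRESHOLD


# Hypothetical allele calls, invented for this example (not real data).
tissue = {"TH01": {"6", "9.3"}, "D5S818": {"11", "12"},
          "TPOX": {"8", "11"}, "vWA": {"16", "18"}}
early_passage = {"TH01": {"6", "9.3"}, "D5S818": {"11", "12"},
                 "TPOX": {"8", "11"}, "vWA": {"16", "18"}}
late_passage = {"TH01": {"7", "9.3"}, "D5S818": {"10", "13"},
                "TPOX": {"11"}, "vWA": {"16", "17"}}

print(same_origin(early_passage, tissue))  # early passage vs. source tissue
print(same_origin(late_passage, tissue))   # late passage vs. source tissue
```

In this toy data the early passage matches the source tissue at every allele, while the late passage shares only a few alleles with it, which is the pattern one would expect if a culture had been overtaken by an unrelated line.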

It is hardly surprising that the first retraction in Nature Methods is due to cell line contamination, a well-acknowledged problem. A 2009 Editorial in Nature pointed to the disturbing results of cell testing by repositories, which indicated that 18-36% of cultures were misidentified. It called on repositories to authenticate all of their lines, and on major funders to provide testing support to grantees. At that point funders could require cell line validation for investigators to retain funding, and Nature would require that all immortalized lines used in a paper be verified before publication. Unfortunately, it is now 2013 and we are still far from this goal.

But progress is being made. Community-based efforts are alerting researchers to this problem and providing resources to help them avoid being misled by erroneous results caused by cell line contamination. A 2012 Correspondence in Nature by John R. Masters on behalf of the International Cell Line Authentication Committee (ICLAC) pointed to the following resources available to researchers:

- An STR procedure available from the American National Standards Institute webstore.

- Two helpful PDF documents from the ICLAC with information and tips on authentication including ‘Advice to Scientists’ and an ‘Authentication SOP’.

- A continuously curated list of more than 400 misidentified cell lines (Database of Cross-Contaminated or Misidentified Cell Lines, Version 7.1).

Please go to the ICLAC website for the most recent version of each of these documents.

Meanwhile, in early 2013, at the publication end of the process, the Nature journals published coordinated editorials announcing a reproducibility initiative and stating that “…authors will need to […] provide precise characterization of key reagents that may be subject to biological variability, such as cell lines and antibodies.” In practice, the Nature journals currently require all authors to state whether or not testing was done, but require the testing itself only in cases where it makes particular sense.

Advocates have cogent arguments for a uniform mandatory testing policy. First, it would avoid sending a confusing message; second, researchers can’t be certain that cell misidentification or mycoplasma contamination isn’t affecting their results; and finally, continued publication of inaccurate species and tissue designations for misidentified cell lines propagates misinformation.

In the work described in the retracted 2010 manuscript from Radovanovic and colleagues, mandatory testing would certainly have been beneficial. However, for what is probably the majority of work published by Nature Methods, there is no question that testing would have no impact on the reported results. For example, in 2011 and 2012 we published at least 17 manuscripts that reported new fluorescence microscopy methods and used imaging data from cell lines to assess how well the techniques measure fundamental cell properties, such as the appearance and width of actin or microtubule filaments, membrane vesicles or other universal cellular structures. Cell line identity and even mycoplasma contamination would not affect the efficacy or conclusions of these measurements. The same situation exists for the validation and testing of many methods in other research disciplines such as proteomics, genomics and biophysics.

Even if these labs should be doing cell validation and mycoplasma testing as a matter of course as part of proper cell culture procedure, mandating that all these studies include such testing as a requirement for publication is unjustified.

But clearly even our most recent efforts at improving compliance with good testing practice will not be sufficient to eliminate cell contamination as a problem in work published in Nature journals. A possible solution may be to require testing by default while permitting authors to argue why, in their case, testing is clearly unnecessary. Editors (possibly with reviewer input) would be the final arbiters and would need to ensure that, although the lines are named and sourced, no species or tissue identifiers are included in the manuscript in the absence of proper validation.

Technology development labs or others that use cell lines only for purposes distinct from biological investigation could continue to avoid testing. But any lab that might use its cell lines to obtain biological results would know that it should institute a proper testing regimen or risk its work not being publishable in a Nature journal.

At this point this is only an idea based on our experience at Nature Methods. We encourage the community to comment and let us know what they think.