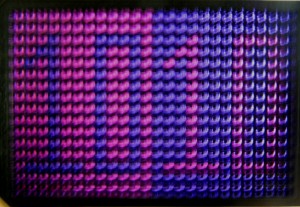

Optogenetic manipulation of cellular properties has revolutionized neuroscience, and the technology can also be applied to manipulate signaling pathways, transcription or other processes in non-neuronal cells. Here, we highlight some of the papers we have published on the neuroscience side of optogenetics.

Optogenetic tools

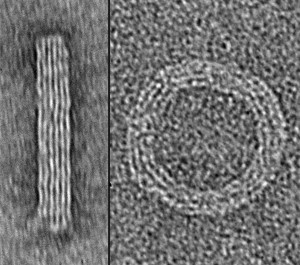

2014 has been an exciting year for us with the publication of new optogenetic tools. Klapoetke and Boyden developed Chrimson and Chronos, two channelrhodopsins that they discovered in a screen of algal transcriptomes. Chrimson is more red-shifted than previously known channelrhodopsins while Chronos has faster kinetics. Hochbaum and Cohen described another algal channelrhodopsin called CheRiff, which is highly sensitive to blue light stimulation, making it compatible with red-shifted voltage sensors.

Previously, we published papers describing modifications to optogenetic tools. For example, Prakash and Deisseroth tailored opsins with custom properties. To ensure stoichiometric expression of optogenetic activators and/or inhibitors, Kleinlogel and Bamberg simply and elegantly fused the two proteins into a single chain. Depending on the two partners, this marriage can lead to synergistic or bidirectional effects. Finally, Mattis and Deisseroth undertook a comprehensive characterization of available tools.

Optogenetic applications

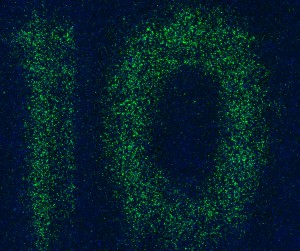

Since the initial description of channelrhodopsin-2 (ChR2) as an efficient tool to evoke neural activity in a light-dependent manner, we have seen a flurry of papers applying ChR2 to a variety of questions in neuroscience. For instance, Zhang and Oertner combined this tool with two-photon calcium imaging in rat slices to study synaptic plasticity. Liewald and Gottschalk applied the same methodology to analyze synaptic function in freely moving C. elegans.

ChR2 can also be used to map the function of brain regions, as Ayling and Murphy demonstrated by evoking activity in limb muscles via light stimulation in the motor cortex of ChR2 transgenic mice. Similarly, Guo and Ramanathan mapped neural circuitry in C. elegans by combining ChR2-mediated neural activation with imaging of a genetically encoded calcium sensor in downstream neurons. To facilitate circuit mapping in mice, Zhao and Feng generated mouse lines that express ChR2 in GABAergic, cholinergic, serotonergic or parvalbumin-expressing neurons.

While ChR2 is a very popular tool in optogenetics, other family members can do the job as well. C1V1T is a fusion of two different opsins and is particularly useful when applying two-photon excitation, as shown by Packer and Yuste. ReaChR is activated by red light and is thus especially useful in vivo; Inagaki and Anderson studied courtship behavior in Drosophila with this tool.

Method of the Year

We celebrated the impact of optogenetics by recognizing the technology as our Method of the Year 2010. We marked the occasion with the publication of special Commentaries on the subject. Deisseroth discussed the past, present and future of optogenetics. Hegemann and Möglich deliberated on the exploration of new optogenetic tools. And Peron and Svoboda illuminated the precise delivery of optogenetic stimulation. In addition, our News Feature recounted the stories behind the “Light tools”.

If we have sparked your interest, the papers mentioned above are listed below.

We are excited to hear about the upcoming developments in optogenetics from you.

Nathan C Klapoetke, Yasunobu Murata, Sung Soo Kim, Stefan R Pulver, Amanda Birdsey-Benson, Yong Ku Cho, Tania K Morimoto, Amy S Chuong, Eric J Carpenter, Zhijian Tian, Jun Wang, Yinlong Xie, Zhixiang Yan, Yong Zhang, Brian Y Chow, Barbara Surek, Michael Melkonian, Vivek Jayaraman, Martha Constantine-Paton, Gane Ka-Shu Wong & Edward S Boyden

Independent optical excitation of distinct neural populations

Nature Methods 11, 338–346 (2014) doi:10.1038/nmeth.2836

Daniel R Hochbaum, Yongxin Zhao, Samouil L Farhi, Nathan Klapoetke, Christopher A Werley, Vikrant Kapoor, Peng Zou, Joel M Kralj, Dougal Maclaurin, Niklas Smedemark-Margulies, Jessica L Saulnier, Gabriella L Boulting, Christoph Straub, Yong Ku Cho, Michael Melkonian, Gane Ka-Shu Wong, D Jed Harrison, Venkatesh N Murthy, Bernardo L Sabatini, Edward S Boyden, Robert E Campbell & Adam E Cohen

All-optical electrophysiology in mammalian neurons using engineered microbial rhodopsins

Nature Methods 11, 825–833 (2014) doi:10.1038/nmeth.3000

Rohit Prakash, Ofer Yizhar, Benjamin Grewe, Charu Ramakrishnan, Nancy Wang, Inbal Goshen, Adam M Packer, Darcy S Peterka, Rafael Yuste, Mark J Schnitzer & Karl Deisseroth

Two-photon optogenetic toolbox for fast inhibition, excitation and bistable modulation

Nature Methods 9, 1171–1179 (2012) doi:10.1038/nmeth.2215

Sonja Kleinlogel, Ulrich Terpitz, Barbara Legrum, Deniz Gökbuget, Edward S Boyden, Christian Bamann, Phillip G Wood & Ernst Bamberg

A gene-fusion strategy for stoichiometric and co-localized expression of light-gated membrane proteins

Nature Methods 8, 1083–1088 (2011) doi:10.1038/nmeth.1766

Joanna Mattis, Kay M Tye, Emily A Ferenczi, Charu Ramakrishnan, Daniel J O’Shea, Rohit Prakash, Lisa A Gunaydin, Minsuk Hyun, Lief E Fenno, Viviana Gradinaru, Ofer Yizhar & Karl Deisseroth

Principles for applying optogenetic tools derived from direct comparative analysis of microbial opsins

Nature Methods 9, 159–172 (2012) doi:10.1038/nmeth.1808

Yan-Ping Zhang & Thomas G Oertner

Optical induction of synaptic plasticity using a light-sensitive channel

Nature Methods 4, 139–141 (2006) doi:10.1038/nmeth988

Jana F Liewald, Martin Brauner, Greg J Stephens, Magali Bouhours, Christian Schultheis, Mei Zhen & Alexander Gottschalk

Optogenetic analysis of synaptic function

Nature Methods 5, 895–902 (2008) doi:10.1038/nmeth.1252

Oliver G S Ayling, Thomas C Harrison, Jamie D Boyd, Alexander Goroshkov & Timothy H Murphy

Automated light-based mapping of motor cortex by photoactivation of channelrhodopsin-2 transgenic mice

Nature Methods 6, 219–224 (2009) doi:10.1038/nmeth.1303

Zengcai V Guo, Anne C Hart & Sharad Ramanathan

Optical interrogation of neural circuits in Caenorhabditis elegans

Nature Methods 6, 891–896 (2009) doi:10.1038/nmeth.1397

Shengli Zhao, Jonathan T Ting, Hisham E Atallah, Li Qiu, Jie Tan, Bernd Gloss, George J Augustine, Karl Deisseroth, Minmin Luo, Ann M Graybiel & Guoping Feng

Cell type–specific channelrhodopsin-2 transgenic mice for optogenetic dissection of neural circuitry function

Nature Methods 8, 745–752 (2011) doi:10.1038/nmeth.1668

Adam M Packer, Darcy S Peterka, Jan J Hirtz, Rohit Prakash, Karl Deisseroth & Rafael Yuste

Two-photon optogenetics of dendritic spines and neural circuits

Nature Methods 9, 1202–1205 (2012) doi:10.1038/nmeth.2249

Hidehiko K Inagaki, Yonil Jung, Eric D Hoopfer, Allan M Wong, Neeli Mishra, John Y Lin, Roger Y Tsien & David J Anderson

Optogenetic control of Drosophila using a red-shifted channelrhodopsin reveals experience-dependent influences on courtship

Nature Methods 11, 325–332 (2014) doi:10.1038/nmeth.2765

Karl Deisseroth

Optogenetics

Nature Methods 8, 26–29 (2011) doi:10.1038/nmeth.f.324

Peter Hegemann & Andreas Möglich

Channelrhodopsin engineering and exploration of new optogenetic tools

Nature Methods 8, 39–42 (2011) doi:10.1038/nmeth.f.327

Simon Peron & Karel Svoboda

From cudgel to scalpel: toward precise neural control with optogenetics

Nature Methods 8, 30–34 (2011) doi:10.1038/nmeth.f.325

Monya Baker

Light tools

Nature Methods 8, 19–22 (2011) doi:10.1038/nmeth.f.322