Experiments can't be reproduced, other researchers' data are hard to come by, and the steps between data and figure are described as ‘here, a miracle happens’.

Speakers at the Publishing Better Science through Better Data (#scidata16) conference addressed these issues and more.

Publishing Better Science through Better Data journalism competition winner Réka Nagy.

Most research happens behind closed doors, and the results can only be gleaned once they’ve been published. The raw data behind those results, however, are rarely made public, and the steps taken to get from data to the figures in a publication are not always clear. This has contributed to the reproducibility crisis currently facing research. Something clearly needs to be done, and the collective mind of science is coming up with inventive solutions.

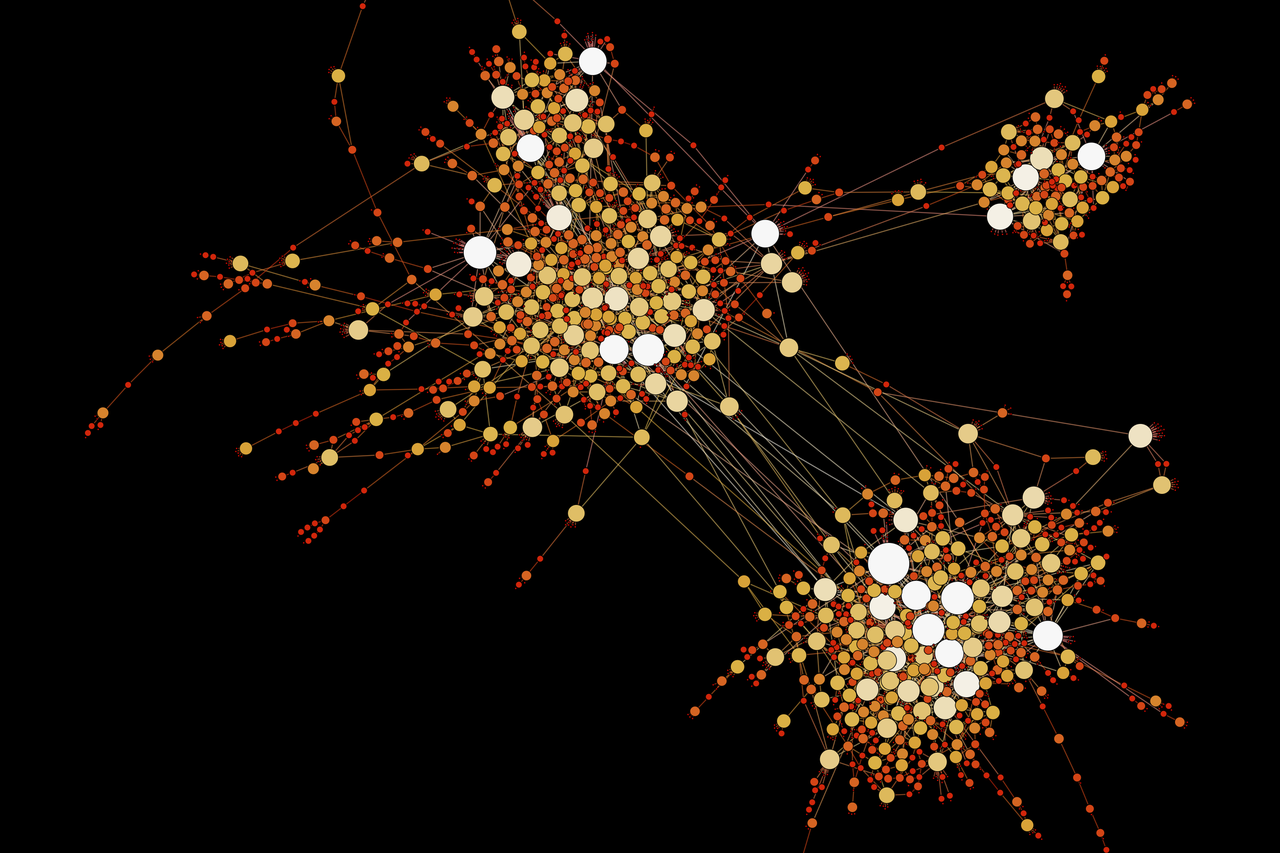

The steps taken to get from data to figures in a publication are not always clear {credit}SlvrKy/Wikimedia Commons CC-BY-SA-4.0 {/credit}

Florian Markowetz is a strong advocate of working reproducibly. In his papers, he provides the raw data and the code that show how he gets from data to figure. With this information, anyone can re-run the whole analysis: members of his lab, reviewers, or any reader of his paper. This makes mistakes easy to correct, documents the entire analysis process, and lets other scientists quickly re-create the result files should the input data change.

This works really well when your entire analysis is done in a single, freely available statistics program, and when you’re free and able to publish your raw data. But if your research involves many programs, some of which need a paid licence, and your dataset runs to terabytes and can’t be freely distributed, this approach starts to run into barriers.

These hurdles don’t stop the average scientist from working reproducibly, though. By meticulously cataloguing experiments and keeping track of every change made to the data, scientists can know exactly how each other’s results were obtained, and nobody has to start from scratch.
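One lightweight way to keep track of changes to data, even when the data themselves can’t be shared, is to record a checksum of every input file alongside the results. The Python sketch below is only an illustration under our own assumptions: the function names and the idea of a plain dictionary "manifest" (file path mapped to SHA-256 digest) are hypothetical, not a tool used by any of the speakers.

```python
import hashlib
import json
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks so large files fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_files) -> dict:
    """Hypothetical manifest format: map each input file path to its checksum."""
    return {str(p): sha256_of(Path(p)) for p in sorted(data_files)}


def save_manifest(manifest: dict, out_path: Path) -> None:
    """Store the manifest as JSON next to the results it describes."""
    out_path.write_text(json.dumps(manifest, indent=2, sort_keys=True))


def changed_inputs(old_manifest: dict, new_manifest: dict) -> list:
    """List input files that were added, removed or modified between two runs."""
    keys = set(old_manifest) | set(new_manifest)
    return sorted(k for k in keys if old_manifest.get(k) != new_manifest.get(k))
```

Comparing the manifest saved with last month’s figures against one built today tells you which inputs changed, and therefore which parts of the analysis need re-running, without publishing the data itself.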

Rachel Harding, who studies Huntington’s disease at the University of Toronto, has been quick to grasp the benefits of sharing her research far and wide. She created Lab Scribbles, an online platform where she shares her work, rationale, methods and raw data. This helps her keep track of the science, and lets others interact, collaborate and learn from her successes (and failures).

Sometimes, it’s not your own data that are the issue. You can be the most meticulous person ever, but if your research relies on other people’s data, you can be in for a headache. Antica Čulina and Nathan Golightly have spent many sleepless nights searching for data they can use. She needs to bring together information collected around the world to gain deeper insights into her work on divorce in birds (yes, that’s a real thing). He compares how well machine learning algorithms predict cancer from freely available gene expression data. Finding these data is only the first challenge. When I pressed them during a Q&A session, they admitted to throwing away potentially valuable datasets because they were poorly annotated, contained the wrong data, or came in some arcane file format. “They made me doubt science a bit, made me doubt the quality of these studies”, says Čulina. Both strongly urge anyone sharing data to make it FAIR: findable, accessible, interoperable and reusable.

Beyond individual efforts, large-scale research initiatives are also making their data open, such as the Human Genome Project or, more recently, the Exome Aggregation Consortium (ExAC). Anyone can download, modify, use and share ExAC’s data, as long as they attribute it properly and keep any redistributed versions open. The same spirit of openness drives Wikipedia and has long shaped software development: Linux, for example, is open source and can be freely modified and distributed by anyone.

The Montreal Neurological Institute has taken this one step further, making all research data and samples obtained at the institute freely available after publication (within the bounds of sample availability and patient confidentiality), and forgoing the pursuit of patents. Guy Rouleau, the mastermind behind this bold step, says that research should accelerate science to help people rather than to make money. He hopes that this will speed up drug discovery for neurological disorders, and that other institutes will follow suit.

There are still many challenges that need to be addressed before researchers and institutions adopt open, reproducible science. But ten years ago, the open data movement was barely learning how to crawl. Today, we’re hearing the starter pistol. In another ten years, we may see it run.

Réka Nagy is a PhD student at the MRC Institute of Genetics and Molecular Medicine at the University of Edinburgh, unravelling how genetics shapes our health using large family-based datasets. When she’s not busy writing scripts and analysing data, she can be found communicating science or using a computer to play video games and design anything from posters to dream homes. You can find her on LinkedIn and Twitter.

You can access all the slides and videos from Publishing Better Science through Better Data 2016, as well as the great visual summary of the day, on the event website.
