Alberto Cairo responds to a Correspondence criticising the use of storytelling techniques in scientific research articles and journalism.

Nature Methods’ August Points of View article by Alberto Cairo and Martin Krzywinski described how to use techniques of storytelling to design better scientific figures. That article prompted a passionate response from Yarden Katz arguing that storytelling has no place in scientific articles. Cairo and Krzywinski respond that their article was overinterpreted. This exchange prompted us to argue in the November Editorial that storytelling serves an important role when used properly.

In this guest post, Alberto Cairo expands on their printed response.

Yarden Katz’s thoughtful response to our short column about visual storytelling techniques in science communication makes many cogent observations. We will use them as a starting point for a deeper discussion of the contents of the column itself.

First of all, Katz sees too much in our words. As explained in our published response to the Correspondence by Katz, we didn’t advocate for the use of storytelling to drive experiments. That is a very legitimate concern, but it was not our goal to promote this idea, so we won’t comment further on it.

Second, Katz presents an incomplete image of what storytelling and journalism are. He says that “great storytellers embellish and conceal information as necessary to evoke a response in their audience. Inconvenient truths are swept away while marginalities are amplified or spun to make a point more spectacular.” This is a rather bold claim that may be guilty of the same malady it denounces. It highlights the worst and obscures the best to be emotionally powerful.

It is true that many journalists begin with a preconceived idea (a narrative structure) and then choose the data that best fit it. They cherry-pick evidence to make a stronger and clearer point. They magnify outliers without mentioning the overwhelming prevalence of average values. This is the problem Christopher Chabris identified in the work of the famous journalist Malcolm Gladwell in a recent long article (1).

This is not the approach we were trying to explain in our column. Proceeding this way, as Katz wrote, is wrong, and it is as wrong in science as it is in journalistic storytelling.

Moreover, we would like to remind Katz that there’s a long-rooted tradition in journalism that tries to stick to standards of truth which are close to those used in science. It was defined forty years ago by Professor Philip Meyer, of the University of North Carolina at Chapel Hill, as “precision journalism”. Precision journalism consists of the use of social science research techniques in news reporting: surveys, statistics, data analysis, visualization, etc. In the best of all worlds, all journalism would be based on a careful evaluation of data and evidence, but precision journalism tried to elevate the standards of what proper evidence really is, even given the pressures journalists endure and the tight deadlines they must meet.

That tradition has mutated into different branches of journalism that overlap greatly, computer-assisted reporting (CAR) and data-driven journalism (2) among them. The most famous exponent of this tradition nowadays is Nate Silver, author of the blog FiveThirtyEight, who, using mainly Bayesian techniques, correctly predicted the results of several elections (3).

What is the method of journalists—storytellers—in these areas? They don’t pitch an idea and then try to find the best data to support and embellish it. Ideally, they may begin with a fuzzy notion of what they want to focus on, and then they collect evidence systematically and let stories emerge from it. These stories may be completely opposite to the notion they had in mind at the beginning. Finally, they write those stories or, as we suggested in our column, they visualize them, in many cases with the close advice of experts in the areas they are covering (4). This is the storytelling tradition we were thinking about when writing our column.

Another point that we made is that the techniques described are helpful mainly when researchers need to communicate with non-specialized audiences. Journalists and storytellers are aware that people cannot absorb large amounts of information at once, and that in many cases they lack the background necessary to understand complex scientific research. As we wrote, “inviting readers to draw their own conclusions is risky because even simple messages can hide in simple data sets.”

However, and this is a critical point, nothing prevents researchers or journalists from presenting two or more competing interpretations when they are equally founded on evidence or when there’s great uncertainty. Nor does anything prevent them from first presenting their main conclusions in the form of an evidence-based visual story, a narrative or, at least, a compelling composition (not all information can be framed as a story, after all) and then letting those readers interested in exploring the multiple nuances or angles of an investigation access the data gathered and analyzed for it. This is something data journalists do today (5).

Any of those approaches would help avoid the challenge correctly pointed out by Katz: “complex experiments afford multiple interpretations and so such deviances from the singular narrative must be present somewhere.” Indeed. Just not at the first level of the presentation. To communicate effectively, information needs to be layered and sequenced in a way that can be processed correctly by audiences (6) while respecting all its nuances. For good examples of journalistic work that is both engaging and evidence-based, see the books by David Quammen, Carl Zimmer, or David Dobbs.

And it’s not just journalists who embrace this particular kind of storytelling technique. Many scientists do, too. As a recent example, take Michael E. Mann’s The Hockey Stick and the Climate Wars: Dispatches from the Front Lines, a book that presents the evidence for global warming in the form of a narrative that is deep, rich, and captivating at the same time.

I’d like to conclude by quoting the words of the Yale University professor Robert P. Abelson that we included in our column. In his most popular book, Statistics as Principled Argument (1995), Abelson wrote that he used to ask his students “If your study were reported in the newspaper, what would the headline be?” That doesn’t mean that this headline is the only element that should be reported. Rather, it means that it should be the first element to be reported, followed by a discourse based on, to borrow Katz’s beautiful description, “evidence and arguments that are used—with varying degrees of certainty—to support models and theories.” This would be a discourse that is interesting to read and that thoroughly respects the integrity and the complexity of the underlying data. Therefore, we believe that storytelling, if carefully handled, can be compatible with the framing for presenting scientific results that Katz outlines.

Footnotes

(1) See https://blog.chabris.com/2013/10/why-malcolm-gladwell-matters-and-why.html

(2) The academic literature in communication studies and journalism has not reached an agreement on how these categories should be defined. Basically, CAR focuses on the use of data and databases to inform traditional reporting work (writing and speaking). Data-driven journalism expands the scope to also include the design of tools for readers to explore data, such as visualizations, mobile apps, etc.

(3) Silver’s blog used to be hosted by The New York Times. It has recently moved to ESPN.

(4) Journalists are, by tradition and training, jacks-of-all-trades, even those who specialize in research, statistics, and computing.

(5) ProPublica and The Texas Tribune, for instance, are two independent, non-profit investigative journalism organizations that frame their projects as stories but usually let readers access the databases they put together and analyzed.

(6) Multiple recent books warn against the dangers of storytelling, cognitive biases, and patternicity, the tendency to see patterns where none exist. Arguably, the most popular ones are Kahneman (2011) and Shermer (2011). However, both authors also concede that we humans love stories, and that we understand complicated information better if it can be presented as a story. So why not take advantage of that feature if we are conscious of its possible shortcomings?

REFERENCES

Abelson, Robert P. (1995). Statistics as Principled Argument. Psychology Press.

Kahneman, Daniel (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux.

Mann, Michael E. (2012). The Hockey Stick and the Climate Wars: Dispatches from the Front Lines. Columbia University Press.

Meyer, Philip (1973). Precision Journalism: A Reporter’s Introduction to Social Science Methods. Indiana University Press.

Shermer, Michael (2011). The Believing Brain: From Ghosts and Gods to Politics and Conspiracies—How We Construct Beliefs and Reinforce Them as Truths. Times Books.

Silver, Nate (2012). The Signal and the Noise: Why So Many Predictions Fail — but Some Don’t. Penguin Press.

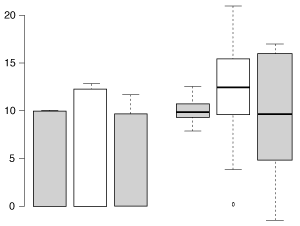

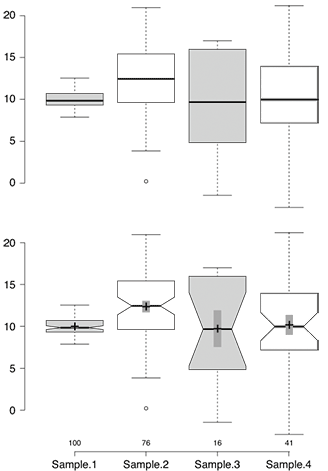

Elements of a figure
