This guest blog comes from Ritu Dhand, VP Editorial, Nature Journals.

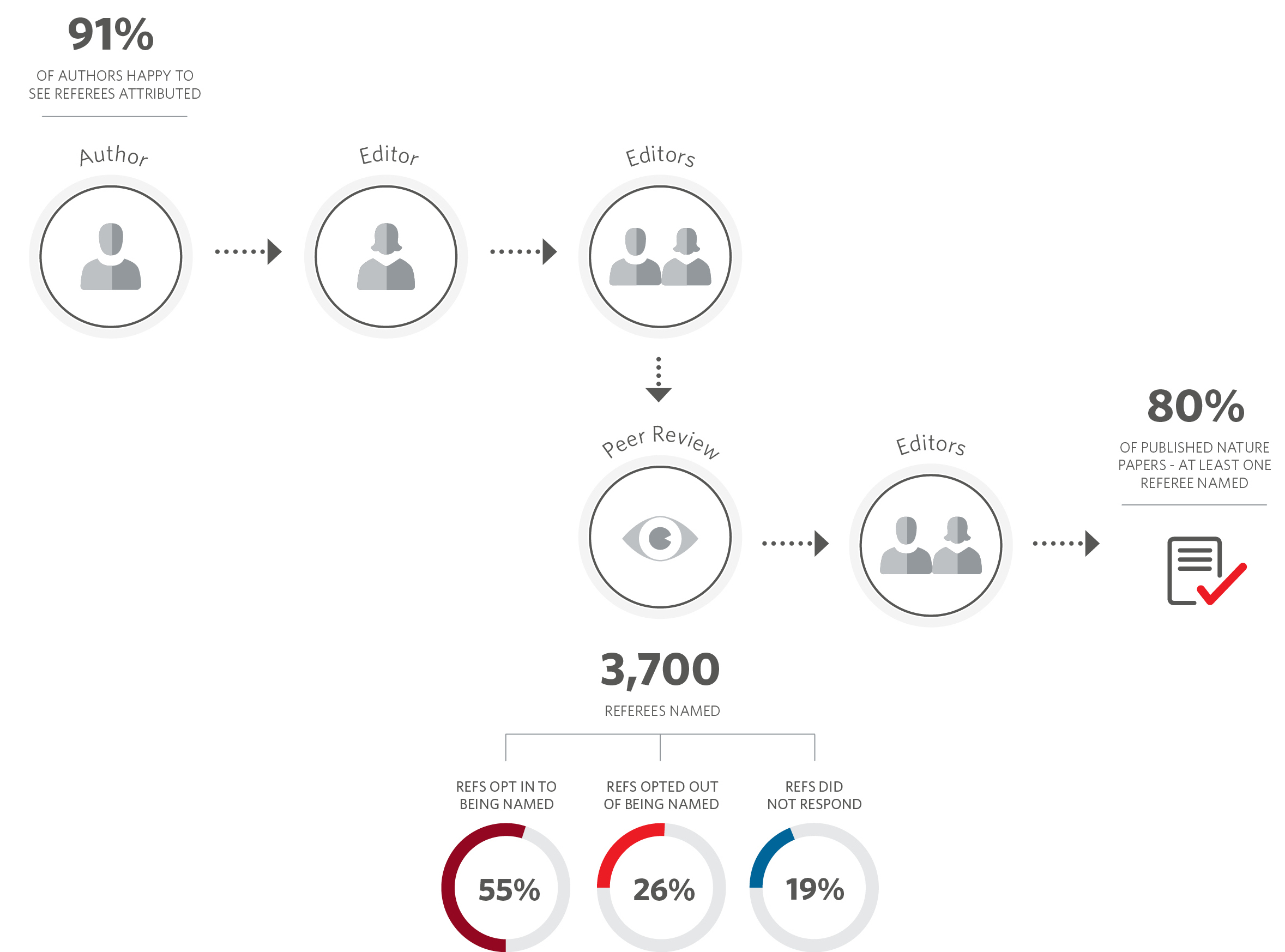

Nature’s trial of formally acknowledging its peer reviewers, by naming them on published papers with the permission of both referee and author, has been expanded to eight more Nature Research journals. During the initial phase, 55% of referees opted in.

The Nature-branded journals publish over 8,000 primary research papers each year. Behind each paper is a talented team of reviewers who have helped our professional editors to assess the scientific claims being made. Peer review is the formal quality-assurance mechanism whereby research manuscripts are subjected to technical evaluation and assessment of impact, and is a cornerstone of quality, integrity and reproducibility in research. However, most reviewers receive little recognition for their efforts. Given how highly we value the contributions of our reviewers, we wanted to give them an option to be formally and publicly recognised for their role in the peer-review process.

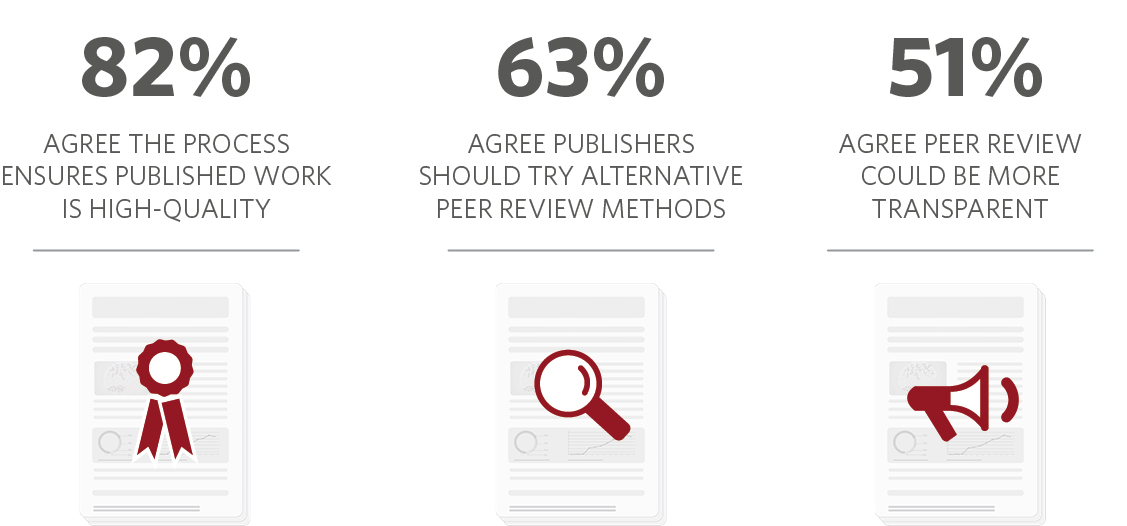

We have also been exploring ways to introduce transparency to the peer-review process. For several years, researchers have argued that single-blind peer review, in which the author does not know who the referees are, is sub-optimal. Because the process lacks transparency, researchers must trust that referees and editors are acting with integrity and without bias. In a survey with responses from 1,230 Nature referees, 82% agreed that the traditional peer-review process is effective in ensuring that published work is of high quality. Yet 63% of respondents also agreed that publishers should experiment with alternative peer-review methods, and 51% agreed that peer review could be more transparent and that they expected publishers to do more.

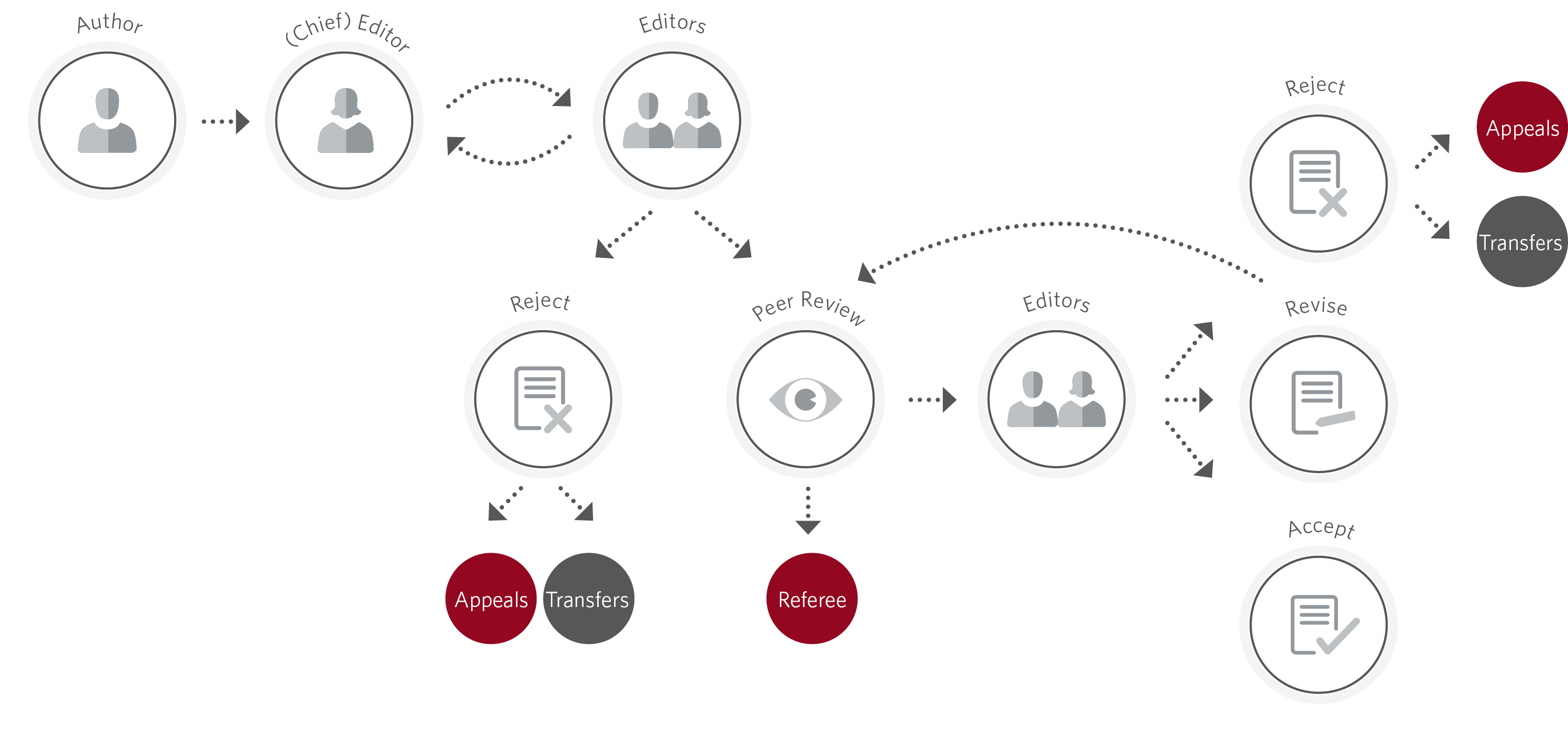

As a way of both acknowledging the work of reviewers and introducing transparency to the peer-review process, we launched our referee recognition trial at Nature in spring 2016. At the end of the peer-review process, authors and peer reviewers are given the option of having referee names formally acknowledged on the published paper. If the authors agree, peer reviewers who give permission will have their names included in the ‘reviewer information’ section of the paper, where we thank them for their contribution. On a given paper, some referees may choose to be named while others remain anonymous.

Over the last three years, around 3,700 Nature referees across the natural sciences have chosen to be publicly recognised, and around 80% of Nature papers have at least one named referee. We have not seen any significant differences in behaviour between researchers in the life and physical sciences. Of Nature authors, 91% opted in to the trial; among referees, 55% opted in, 26% opted out and 19% did not respond. When surveyed, 80% of referees who had participated in the Nature referee recognition trial said they would be happy to be named again. The Nature Reviews journals rolled out the referee recognition trial one year ago and saw 57% of reviewers opt in to be publicly named. More recently, we have rolled out the trial at eight Nature Research journals: Nature Astronomy, Nature Climate Change, Nature Nanotechnology, Nature Neuroscience, Nature Physics, Nature Plants, Nature Protocols and Nature Communications.

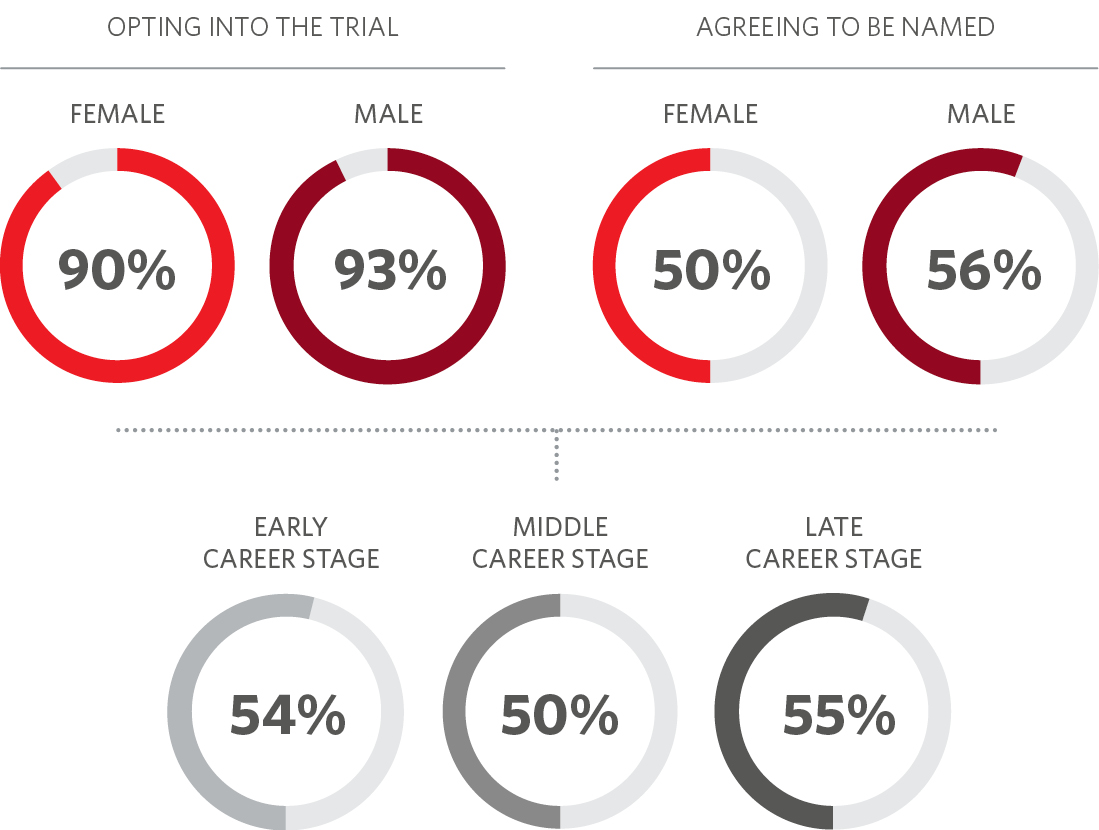

We analysed the gender and career stage of authors and referees who took part in the trial over a nine-month period (where these data were available in our peer review system or could be cross-checked with public sources). The percentages of female and male corresponding authors opting into the trial were similar: 90% and 93%, respectively. The proportion of female and male referees who agreed to be named was also similar: around 50% and 56%, respectively. A similar proportion of referees from early, middle and late career stages were happy to be named: 54% of researchers/post-docs, 50% of assistant/associate professors, and 55% of professors opted in to the trial.

We also surveyed all reviewers who had reviewed for our journals over the course of one year, to better understand their motivations for participating in peer review and their views on peer review more broadly. Altruism was a key driver of participation: of the researchers who responded, 87% said they considered peer review part of their academic duty and 77% said that participating would help to safeguard the quality of published research. By contrast, only 6% of reviewers said that peer review enhanced their CV and 7% said it encouraged favourable views from editors. Unsurprisingly, most reviewers (94%) said that the subject area is a key factor when deciding which manuscripts to review.

Despite the time and effort peer review requires, 71% of respondents did not expect acknowledgement for it, and 58% thought that rewards might compromise the review process. However, when asked from whom they would most value recognition for peer review, 44% named publishers or editors.

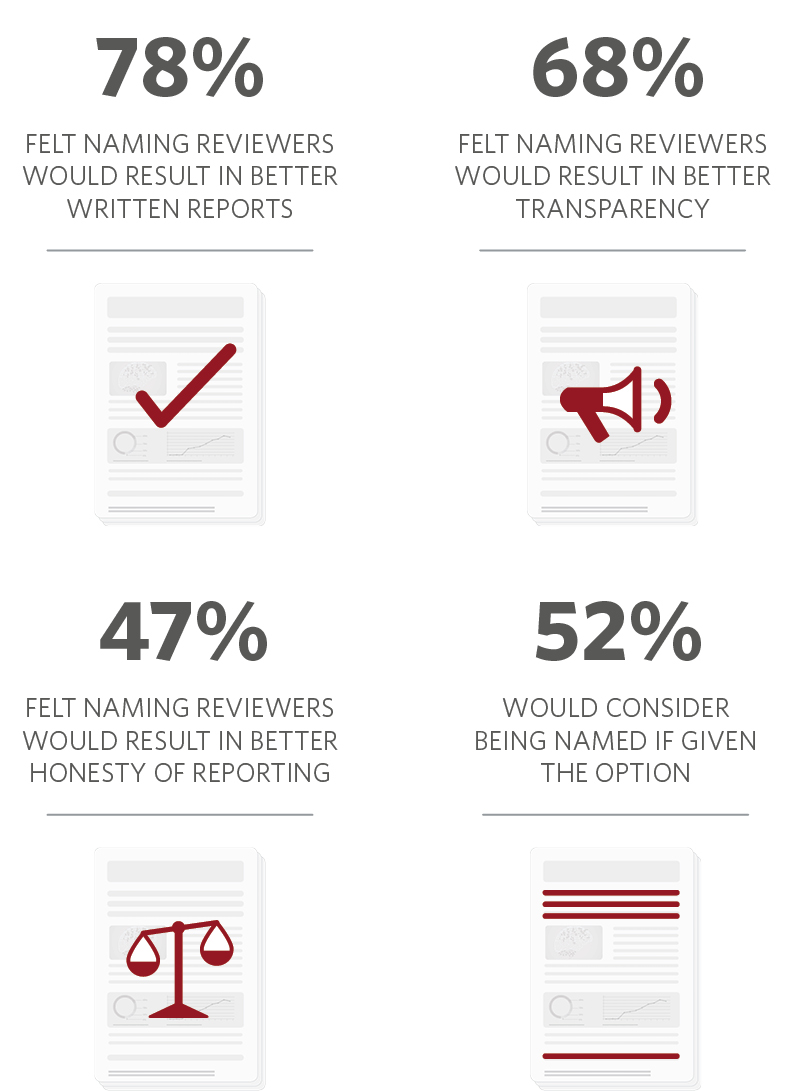

We also asked reviewers what they thought the impact of public referee recognition might be. Of the respondents, 78% felt that naming reviewers would result in better-written reports, 68% thought it would have a positive impact on transparency, and 47% thought it would have a positive effect on the honesty of reporting. In addition, 52% of those who had not been formally acknowledged by a Nature-branded journal said they would consider being named if given the option.

About a quarter of researchers opted out of the trial and appeared to oppose the principle of naming referees on published papers. Their concerns mainly centred on two risks: that the practice could make it easier for individuals to game the system (perhaps starting a ‘you owe me’ mechanism), and that referees might tone down their reports, either to avoid upsetting authors or for fear of retaliation from disgruntled authors, particularly those in senior positions. Many of these researchers believe that peer review should always be confidential and are against this level of transparency. For these reasons, referee recognition remains optional on the Nature-branded journals.

That so many of our referees choose to be named reflects changing attitudes towards peer review, and we are pleased to be able to publicly acknowledge so many of them for their contribution. We continue to listen to the community and recognise the calls to consider other approaches to peer review. Nature Communications has been publishing referee reports for over three years, and we are discussing whether it would be practical to offer this as an option on other Nature Research journals in the future. Watch this space for further information!

A related Nature editorial is also available: ‘Three-year trial shows support for recognizing peer reviewers’.